“People don’t live in physical space,” consultant for ConstructionVR Jeffrey Jacobson tells me. “We live in psychological space and we shape the world around us constantly. That’s what human beings do. And, as our manufacturing capability becomes more and more powerful, we’re shaping our environment more and more readily. And that’s what 3D printing is about. Actually, 3D printing is now the tip of the spear.”

If 3D printing is just the tip of the spear, then, from my own experience in the 3D printing industry, some 3D printing users have trouble seeing the rest of the spear, beyond additive manufacturing. As it stands, this thirty-year-old technology still requires a great deal of improvement, which we’ve seen boosted dramatically since the RepRap revolution gained prominence in 2012, and businesses may be more focused on enhancing the capabilities of these machines, while reducing costs, before they can pay much attention to technologies like augmented and virtual reality. But, as 3D printers drop in price and improve in terms of build size, useable materials, output quality, and user-friendliness, methods for creating 3D printable content will need to be improved, too. And that’s where AR, VR, haptic devices, gesture control, and 3D scanning come in.

Though businesses may be working to improve the technology of 3D printing itself, the lack of demonstrated AR and VR work in the 3D printing community may also be due to trouble accessing those technologies. While there exist low-cost, Google Cardboard-style devices for visualizing 3D models in stereoscopic 3D on a smartphone, most AR and VR headsets won’t hit the market until next year.

The Oculus Rift Head Mounted Display (HMD) may have been released in developer’s kit form in 2012, with many prototypes being designed since, but it won’t make it to mainstream consumers until the first quarter of next year, with pre-orders launching in the Fall of 2015. The price has not yet been set, but it’s expected that the VR headset will cost around $350.

When it does arrive, however, there is likely to be a great deal of competition, as HTC will be releasing its own VR headset, the Vive, in limited quantities during the holiday season this year, before a full launch also in Q1 2016, with a price of around $300. Meanwhile, the Samsung Gear VR Headset is already on the market and, in addition to the cost of a Galaxy Note 4, has a price tag of $100.

I’ve used Cardboard-style devices before, but I had my first chance to use Gear VR at the Inside 3D Printing event in Santa Clara, last week, when my wife, Danielle, and I ran into Louis Mazziota, Chief Science Officer for the US Army’s Armament Research, Development, and Engineering Center. Mazziota looks out for any software-related developments that might be useful to the Army, bringing him to such tech events as Inside 3D Printing. When discussing the event’s VR Summit, the Army CSO told us that he’d brought the Gear HMD with him.

Unlike Cardboard-style setups, Gear VR features a custom, calibrated inertial measurement unit (IMU) for rotational tracking that has less lag, as well as a touchpad and back button on the side, and a proximity sensor to detect when the headset is being worn. Louis first threw me below the International Space Station, where I floated between the ISS and the Earth. “Look up. Look to the sides,” he instructed me. “Look at the Earth below you.” There, I saw the countless twinkling lights of the Earth’s surface. He then threw my wife into Jurassic World, confronted with a sleeping Brontosaurus, who, after waking up, walked up to her and sniffed her as though it was really there.

For a low-cost VR device, it was definitely a fun experience and almost enough to make me want to get one myself, but, from talks at the VR summit, I know that, when those other VR devices make it to e-store shelves, they’ll only get better. However, I learned both from Jacobson and Carolina Cruz-Neira, one of the most fascinating people at the show, that VR need not be constrained to an absurd and solipsistic face computer.

Cruz-Neira, the head of the University of Arkansas’s Emerging Analytics Center, is a pioneer in what is called CAVE VR, which is a fully-immersive 3D environment that doesn’t wrap around your head, but around your whole room. Using a projection system and advanced mapping algorithms, users can turn their walls into a virtual reality that is made 3D with a pair of anaglyph glasses. Because CAVEs aren’t limited to the skull of a single user, multiple people can join in a consensus virtual reality.

The University of Arkansas professor tells me of how she came to work on CAVE VR, “In 1990/1991, I was a very young person and I was exposed to virtual reality. And I was like, ‘This is cool, but it’s boring! And it’s lonely! And I have to put all of these things on my head and there are cables everywhere!’ And, just by good luck I guess, I was just about to start my PhD.” But, it wasn’t only the headaches caused by HMDs that had driven her in this direction. It was also her own previous experience with theater, Prof. Cruz-Neira explains, “I have a background in dance. I have another life as a ballet dancer, so I have a lot of theater experience. So, I was like, ‘What if we do something that, instead of putting something on your head, we make some sort of theater?’”

Her research has since led to the adoption of CAVE-style systems by numerous large businesses and institutions worldwide, particularly in the auto industry. “You know, every car that we buy today has been designed using a CAVE or a CAVE-like device. Ford, Toyota, Mercedes, BMW, Porsche, Volkswagen. Pretty much any car you drive today – in their design center, in their design-production pipeline – somewhere in there, they have been engineering design reviews with a CAVE, doing drive studies with a CAVE. And there are other fields like that.”

Those who have seen Oculus Rift headsets across their social media feeds, as the HMD hype ramps up, might not immediately grasp the benefits of the CAVE. After all, how can you be completely immersed in the virtual world, if it’s not wrapped around, or sometimes even inserted directly into, your eyeballs? But the advantages are as numerous as those associated with being a living, breathing person.

“A big part of human life is to be able to see each other. I look at your face and you look at mine and your body language and my body language,” the professor says. “So, that’s just part of the way we are and way we understand the world around us. I want to be me in the virtual environment. I know that there are ways and that there are groups out there that are looking at virtual reality as a way to be somebody else or something else, which I think is absolutely great, but there are also a lot of areas where you want to be you and you want to be there with me, not some computer representation of me. You want to see my little twitches, me scratching my head because I don’t understand you – and that helps quite a lot.”

She also says that it helps demystify the technology and enables those unfamiliar with the technology to engage more readily in VR, “There are still people that are intimidated and inhibited when they go into a virtual space. And, because, with these environments, it’s like having this conversation inside of a molecule instead of the convention center, people are more relaxed about it. They participate more.”

A CAVE might not be the ideal space for watching movies and playing video games, but it is a great VR system for collaborative work. “We’re pretty much where we were twenty years ago as far as the understanding of what exactly [head-mounted displays] are for. You know, games are cool. And movies are really neat,” Cruz-Neira says. “But, you know, human life is a lot more than games and movies. I did the CAVE in 1992, so it’s been almost twenty-three/twenty-four years and I have been working in almost any other area of human life that you can think of. I have done all kinds of engineering work, scientific work with molecular biologists, astrophysicists, mathematicians, different areas of medicine, archaeology, history, even in religion, education, military. I’ve been all over the place, not looking for how VR fits in, but what kind of VR fits in with what type of application. Many times when we get outside of the area of entertainment, we have to get outside of platforms that are not individual systems attached to people’s heads.”

Today, Cruz-Neira says that she is platform-agnostic, working with the appropriate technology for a given application and budget, whether it be a head-mounted display, a fully-enclosed VR CAVE, or just a 3D TV. And this means that the cost of such devices can vary greatly, depending on the application. If it’s for an individual to design objects or play games, an HMD might be the best solution. If it’s for group use, there are $2,000 domes or $4,000 CAVEs or 3D TVs that cost just five hundred dollars, or so.

It’s the collaborative component of these sorts of devices that saw Jeffrey Jacobson develop PublicVR, a curriculum and CAVE system designed for education and, so far, used to teach students about the ancient Egyptians, Pompeii, national parks, and more. The goal was to bring VR to the public in an accessible manner. While he may have since become an industry consultant, where he has worked in such diverse fields as medicine and engineering, the goal is very much the same: to reduce the learning curve associated with the technology. “Think of me as a native guide in the confusing world of VR technology and applications,” Jacobson says. “I do training, I can source or manage projects that require VR, I could consult in a wide range of industries, but I had to pick one… I’ve done medical, archaeology, manufacturing.”

The industry he’s picked for now is construction, with his consultancy firm ConstructionVR. Jacobson works primarily for construction and architectural firms that want to better visualize projects before spending the millions of dollars necessary to actually begin building. “Right now, I’m speaking with a property management company and they’re all excited about doing VR. They have all these big construction projects, so I did a demo for their architects. Their architects are excited, now, and so, they want to bring the customer in – the people who are building these huge residential towers in Boston.”

Like Cruz-Neira, he is platform-agnostic, acknowledging that a CAVE is only called for in certain instances and other mixed reality devices might be more appropriate for other applications. “What’s happening is that their customers are starting to ask for VR. Everybody’s heard of it. The idea of seeing the building in VR before you build it – everybody gets that.” When I ask if AR would be ideal for visualizing a building yet to be constructed in a specific location, Jacobson says, “AR would help you understand the environment, but when you need a very detailed model, you want to do it in VR, because a tablet can only give you so much. And, then, when you’re doing the early sketches of the building, you can do them in three dimensions, you can do them in Google SketchUp and navigate that model in 3D space. And because a SketchUp model has no textures or colors, it’s hard to understand spatially.”

Then, it’s when you need everyone looking at the same thing that a multiple person VR system might be the best solution. “When all of the construction is going on, you can get all of the stakeholders – the architects, the structural engineers, the plumbers, the electricians, the HVAC, the inspectors from the city – all of these different people need to understand what the design is and they’re all going to have a different idea if they’re going to piece it together looking at different bits of media. In VR, though, everybody sees it all in one piece and gets to experience it. In that case, I’d like to use a CAVE. Maybe something a little more open than a CAVE. Like a wider, big screen, because, that way, people can kind of sit down and do work and, when you’re not using the VR, you can shut it off and just use that big screen for all kinds of things.” All of this, he says, applies to 3D printing as well, in that, before a business spends time 3D printing an object, they can see the object in VR or AR first.

Just as mixed reality devices are only beginning to be adopted by the 3D printing crowd, it seems as though 3D printing is only beginning to be adopted by the mixed reality crowd, apart from the large companies that use both, like in the auto industry. Cruz-Neira is only just now experimenting with it in her own lab in Arkansas. About the ability to work with 3D models in VR before transferring them to a 3D printer, she says, “My team and I are working on some things like that. Of course, it’s very early stages, but I have a couple of PhD students that are doing 3D sculpting in a CAVE or on a table that is 3D, so that you can sculpt up in the air and off of the table. And, in the long term, we want to be able to automate that process – the design of something that can be 3D printed or turned into any other kind of hard media that is not inside of a computer. And also the reverse. We’re working on projects that have 3D scanners and they’re feeding, live, three-dimensional point clouds in our CAVE, our HMDs, our dome, our table. It just depends on the end-use of the data.”

Clifton Dawson, of the VR market research firm Greenlight VR, says that, though there is some cross-pollination taking place between the two industries, it’s only now starting to get the kick necessary for true integration of VR in 3D printing and vice-versa. “Companies that have used 3D printing in their design process are looking at VR and AR with curiosity. We’ve seen strong interest in the VR particularly by companies started after 2002, when VR was last in major news headlines,” Dawson explains. “Most of their interest has been in using VR as a way to strengthen the collaboration between disparate stakeholders needed to bring physical products to market.”

As his firm – which has just released an extensive research report on consumer expectations for VR – has mapped out the vast VR ecosystem, he’s seen companies working towards solutions that may make the technology more readily usable for those in 3D printing. But both industries are new, VR and AR in particular, so there is definitely still a lot of work to be done in both arenas for them to work together. “We expect the heightened awareness of consumer VR we’ve seen since 2012 will result in virtual and augmented reality being taken seriously at the executive level. However, aligning VR with business objectives comes with very real challenges. Executives must understand a set of complex technologies; understand the rapidly evolving best practices; listen closely to early adopters; and fit creative ideas to tangible business objectives. These aren’t problems that will be fixed overnight,” Dawson says. “We track thousands of pure-play VR companies all over the globe, and we’re seeing a strong interest by investors in companies that are making cameras and end-to-end 3D platforms, which will naturally quicken the adoption of VR in the manufacturing world.”

Right now, much of the 3D printing he is seeing in VR companies serves the same application as 3D printing in all sectors: prototyping. “We see a lot of interesting 3D printing integrations by major technology companies,” Dawson comments. “However, some of the most interesting 3D printing use cases are by small companies and startups using 3D printing to reduce the costs of designing head mounted displays (HMDs) or by making it easy to go from concept to model output.”

Another company that is well aware of the need for haptic devices and VR in 3D printing is Sixense, whose MakeVR software is designed for user-friendly, collaborative 3D modeling through the use of a virtual building block-style interface. Paired with the company’s wireless STEM controllers, users are able to assemble 3D objects in a sandbox environment and send their creations directly to a 3D printer or 3D printing service. Occipital, too, is keen on the direction that the industry is heading, having pitched their low-cost Structure Sensor for iPhones and iPads as a device for AR, VR, and 3D scanning for 3D printing since their successful Kickstarter in 2013. And Sketchfab is setting itself up as the online community where all of these technologies might go to share 3D models online.

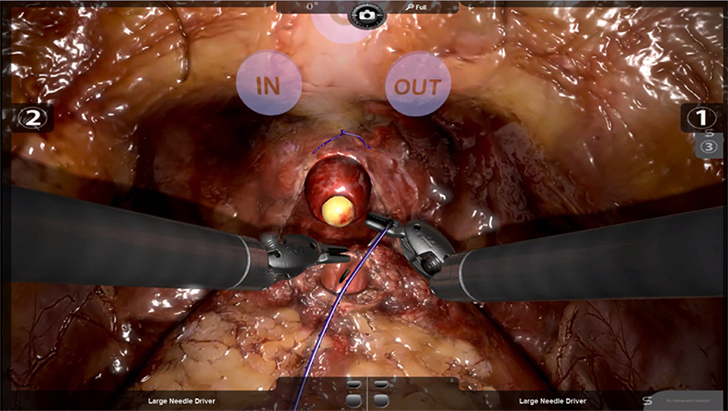

Other, larger companies are especially driving this emerging mixed reality ecosystem, all with their own terms to describe that ecosystem. 3D Systems has been pushing its Digital Thread for a couple of years now, selling its haptic Touch stylus and dipping its toe into VR. After acquiring Simbionix, the company made an even bigger push into the mixed reality space, since releasing what seems like a really impressive suite of medical devices for virtually rehearsing surgeries and medical procedures.

HP also announced its Blended Reality last year and has since released its Sprout desktop computer, which features Intel’s RealSense 3D camera built-in, as well as a vertical touch screen and a horizontal ‘TouchMat’ display. This allows users to 3D scan objects, as well as virtually hand model designs via the touch screens, before sending them to a 3D printer. Right now, HP has partnered with Dremel to boost home 3D printing, as well as French 3D printing service Sculpteo, for more industrial printing options. Then, when the company’s Multi Jet Fusion 3D printing technology is finally released, service bureaus will be able to produce 3D objects with in-house MJF from HP.

Autodesk and Microsoft are key players in this emerging ecosystem, too, with Autodesk providing a good deal of the software that could be used in what they call the Reality Computing space and Microsoft about to ship out its first batch of HoloLens mixed reality headsets in Q1 of 2016. Intel seems to play a role at every turn, providing its RealSense 3D camera to such companies as Google, for its 3D sensing Project Tango smartphone; a number of PC manufacturers, for use in their laptops and tablets; and even 3D printer manufacturer XYZprinting, who are using the RealSense in their new handheld scanner. Intel has also got their processors working within HP’s MJF printers.

These huge companies may dictate the future of the mixed reality ecosystem, as they’re the most aware that this ecosystem is beginning to form. But the relevance of AR/VR to the 3D printing community and of 3D printing to the AR/VR communities was starting to be felt at Inside 3D Printing Santa Clara. And, as soon as more small companies are able to access technologies on either end of the virtual-to-physical spectrum, they’ll be able to have a stake in the ecosystem when it really starts to take shape. It sounds as though the very edges of that bubble will be visible starting in Q1 2016, but just how big that bubble will be remains to be seen. Personally, I think that it will be even larger than PCs, 3D printing, and even smartphones.

To get to that point, to fully realize the potential of mixed reality, we may have to begin looking at these technologies in a different manner, outside of the box of HMDs for gaming and entertainment. Jacobson describes how the public perception of VR might be a bit too constrained. “You know there were these 19th Century ‘Visit the Moon’ attractions,” he says, “where you go in this dark door and you get in this fake rocket ship, and then they take you to this stage set where short people are dressed in green outfits. When you think about it, that really is virtual reality. Only, it’s implemented physically.”

“We live in a world where we have layers of meaning. You have the physical objects,” Jacobson continues. “You have what they mean to us and how we relate to them based on what we think they are and what they’re for. We respond very intensely to things that aren’t physical at all, like personal space or social space or someone else’s territory or we might not want to go into a dark corner. Things like that, that have no physical reality. And, lately, with digital images, we’ve added another layer. And 3D printing lies between the digital object’s layer and the physical object’s layer. It lets us realize things that are digital. And almost helps the vice versa, as well.”

One person who definitely had an awareness of all of this was Roy Sherrill, aka myrddinstarhawk, who was the most visible attendee of Inside 3D Printing Expo and the Virtual Reality Summit. At first glance, Sherrill looks like a steampunk cosplayer, but closer inspection reveals the Samsung Gear strapped to his leather hat and a Bublcam attached to his staff. He tells Danielle and me, “Arthur C. Clarke once stated that ‘any sufficiently advanced technology is indistinguishable from magic.’” Lifting his 3D camera, he explained, “This is my wizard’s staff. I use it to capture slices of reality, anachronisms from the future past.”

Sherrill then went on to describe how he naturally went from collecting steampunk gear from antiquity to collecting soon-to-be relics from the present, transporting himself through space-time with his VR helmet. As abstract as all of this was, his initial venture into steampunk was anchored in the tangible world. Without steampunk gear, his wheelchair made him seem invisible to the mainstream world, causing able-bodied people to disperse upon his approach. But, with it, he became the most visible person at any event, his gear acting as the perfect icebreaker. In this way, Sherrill was shaping his reality physically and virtually.

I know that this is a tech site, but, when that mixed reality ecosystem finally does become palpable, I can’t help but wonder what it will do to our perception of reality. On the one hand, if people start locking themselves into HMDs, experiencing a purely subjective hallucination of the world, or, on the other hand, they enter CAVEs of consensus hallucinations, and can seamlessly bring objects to and from the digital and physical realms, what will the world(s) be like? Will we be like gods shaping the realities around us at will? Will we be forever trapped in virtual illusions with no way to escape because we can no longer even perceive the borders of the false reality we’ve created? If all of that does occur, will life even be any different than it is now?