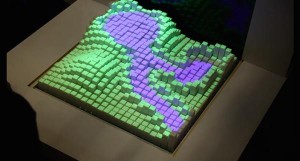

Researchers at MIT have produced a truly insane creation called Materiable: essentially a grid of blocks mounted on motors that can be programmed to respond to touch in ways that are genuinely hard to comprehend at first glance.

Is it the future of wearable tech? It’s possible.

This is a skunkworks project by MIT’s Tangible Media Group, which has combined it with a projector to create a work of modern art. We’re still struggling to get our heads round the full potential of this innovation, although we understand that the end goal is to create a physical representation of digital data.

That will be a two-way street, so in the end we’ll be able to manipulate data through its physical representation, potentially creating waves in the blocks that change the numbers in the underlying data set. What that means in practice, we’re not 100% sure.

What does it do?

MIT has suggested this could be the future of geophysical models, medical exploration and material interaction. There must be something in it, because some of the brightest minds in engineering have worked on this project for several years.

There are advanced concepts at work here, that’s for sure. The strapline on the Vimeo presentation (the line that is supposed to explain it to the layman) is: “Rendering dynamic material properties in response to direct physical touch with shape changing interfaces.”

Building on inFORM

In 2013 the institute revealed inFORM, which allowed a grid of physical pixels to be moved up and down by actuator-controlled pins underneath. That’s the same basic system you see here, but Materiable takes it to the next level with simulated viscosity and much more advanced patterns.

It has also added gesture control and spatial comprehension, so the pixels ‘sense’ additional inputs and incorporate them into the reaction.

Solid blocks behaving like liquid

The most spectacular mode is where the 3D-printed physical pixels, essentially blocks sitting on individual motors, respond like a liquid. Materiable can also mimic sand, rubber, water and more.

In other words, this is a collection of essentially square blocks mimicking the viscosity, elasticity and flexibility of physical materials. The same blocks can behave like water or bedsprings, depending on which algorithm is controlling them at that moment.
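Materiable’s actual algorithms aren’t spelled out here, but a rough sketch helps show how one block can act like a bedspring or a thick liquid just by changing two numbers. The example below is our own simplification, assuming each pin behaves as a damped spring (a standard textbook model, not necessarily what MIT uses); `Material`, `simulate_pin`, `SPRINGY` and `GLOOPY` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Material:
    """Hypothetical material parameters for one simulated pin (our assumption)."""
    stiffness: float  # spring constant k: how hard the pin pushes back towards rest
    damping: float    # damping coefficient c: resistance to motion, viscosity-like
    mass: float = 1.0


def simulate_pin(material, pressed_depth, steps=200, dt=0.01):
    """Release a pin pushed down by `pressed_depth`; return its height at each step.

    Models the pin as a damped spring, m*x'' = -k*x - c*x', integrated with
    semi-implicit Euler. Height 0.0 is the pin's resting position.
    """
    x = -pressed_depth  # start pushed below rest
    v = 0.0
    heights = []
    for _ in range(steps):
        a = (-material.stiffness * x - material.damping * v) / material.mass
        v += a * dt  # update velocity first...
        x += v * dt  # ...then position with the new velocity
        heights.append(x)
    return heights


# Low damping: the pin overshoots and bounces, like a bedspring.
SPRINGY = Material(stiffness=100.0, damping=1.0)
# High damping: the pin creeps back without overshooting, more like a thick fluid.
GLOOPY = Material(stiffness=100.0, damping=60.0)
```

Push a pin down by 1 and let go: with `SPRINGY` the height overshoots well above its resting point and bounces, while with `GLOOPY` it never rises above rest and creeps back slowly. That is roughly the difference between a bedspring and treacle.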

Serious minds at work

MIT has a number of leading lights working on Materiable, including Ken Nakagaki, Luke Vink, Jared Counts, Daniel Windham, Daniel Leithinger, Sean Follmer and Hiroshi Ishii.

Nakagaki and Vink are the lead scientists, and the calibre of the supporting team suggests we’re looking at something much bigger than pretty patterns with blocks.

Making sense of the video

The Vimeo presentation includes a diagram showing a person pressing a sensor, whose input feeds a physical simulation; the result then returns to the person as visual and haptic feedback. We feel this loop is the key to the Materiable system.
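To make that sense, simulate, feedback loop a little more concrete, here is a minimal sketch under heavy assumptions: a single row of pins rather than a 2D grid, each pin pulled back to rest and towards its neighbours by springs, and a `touch` dictionary standing in for the sensor. `step`, `render` and all the parameter values are hypothetical and not taken from MIT’s system.

```python
def step(heights, velocities, touch, stiffness=50.0, coupling=200.0,
         damping=2.0, dt=0.01):
    """Simulation stage (hypothetical): advance a row of pins by one time step.

    `touch` maps pin index -> height imposed by the user's finger (the sensing
    stage). Each free pin is pulled towards rest (0.0) and towards its
    neighbours, so a press spreads outwards as a ripple.
    """
    n = len(heights)
    new_h = list(heights)
    new_v = list(velocities)
    for i in range(n):
        if i in touch:
            # Pin held by the user: it follows the finger instead of the physics.
            new_h[i] = touch[i]
            new_v[i] = 0.0
            continue
        # Edge pins treat the missing neighbour as being at their own height.
        left = heights[i - 1] if i > 0 else heights[i]
        right = heights[i + 1] if i < n - 1 else heights[i]
        force = (-stiffness * heights[i]
                 + coupling * (left + right - 2 * heights[i])
                 - damping * velocities[i])
        new_v[i] = velocities[i] + force * dt
        new_h[i] = heights[i] + new_v[i] * dt
    return new_h, new_v


def render(heights):
    """Feedback stage (hypothetical): map each pin height to a projector brightness 0-255."""
    return [max(0, min(255, int(128 + 127 * h))) for h in heights]


# One run of the loop: a finger holds pin 4 down while the simulation updates.
heights = [0.0] * 9
velocities = [0.0] * 9
for _ in range(5):
    touch = {4: -1.0}                                       # sense
    heights, velocities = step(heights, velocities, touch)  # simulate
    brightness = render(heights)                            # visual feedback
```

Because neighbouring pins pull on each other, pressing one pin drags the pins beside it down too, with the effect fading with distance. That is the kind of ripple that makes the blocks read as a liquid.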

The geophysical models in the video are elegant, especially with the projector that changes the colour of the blocks as they are pressed down. But computer modelling is far simpler, cheaper and quicker.

The medical and educational applications of Materiable make some sense. Material testing seems the most plausible use for the system, as it could provide a standard benchmark of thousands of combinations of flexibility and viscosity against which a test material can be compared.

A means, not the end?

Looking at Materiable as an end in itself might be a little simplistic, though.

The mathematical modelling that has gone into this is seriously impressive. Sharper minds than ours could find a way to apply these algorithms to all manner of future control systems. It may even have applications in wearable tech and almost anywhere else that varying physical inputs like touch, temperature and even sound could elicit a different response from our devices.

So even though you might not recognise it when it comes, Materiable could well form an integral part of everyone’s future.