Autodesk is interested in the future of making things, and it is becoming increasingly clear that at the core of this future lies the integration of 3D technologies, from 3D imaging through parametric design to 3D printing. This is the focus of MadLab’s TACTUM project, a fascinating integrated system for designing 3D printable, wearable objects directly on the body.

I reached out to Madeline Gannon, the founder of the MadLab design collective. She is working on a PhD in computational design and developed the TACTUM project, with support from Autodesk Research, as part of her ongoing research into new methods for faster and more intuitive human-machine interaction. In this iteration, TACTUM was used to design an ergonomic smartwatch wristband, which was then 3D printed in nylon using laser sintering.

“My focus is on novel interfaces with fabrication machines, such as industrial robots, 3D printers, and CNC routers,” Madeline explained. “In particular, the TACTUM project focused on the idea of designing objects for the body. This is quite difficult to achieve with standard CAD software, as it involves a lot of guesswork about ergonomics.”

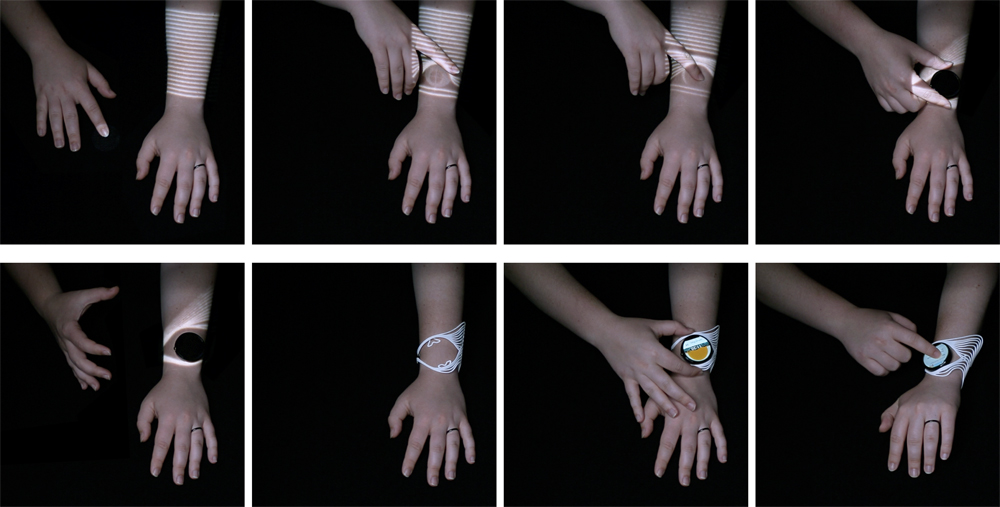

Madeline’s solution was to design wearable objects directly on the body. The system she developed to do this integrates three technologies: projection mapping, to display the digital shapes directly on the body; depth sensing through an imaging device, to capture the modifications the user makes to the digital geometry; and dynamic CAD software, to process the data in real time.

In other words, TACTUM turns the body (in this case the forearm) into the user’s digital canvas, while the other hand becomes the modeling tool. TACTUM went through two versions. In the first, the data capture device was a Kinect, which uses structured light to acquire depth data. In the second, it used a Leap Motion controller, which tracks the hands with a pair of infrared cameras.

“I am very excited about the new mobile depth sensors that are coming, such as Google’s Project Tango, for fast mapping of distances,” Madeline added. “I am interested to see whether mobile platforms will be able to handle more fine-tuned gestural interactions.” The TACTUM system might also be a great fit for HP’s Sprout ecosystem; HP, incidentally, is also partnering with Autodesk.

For this first research project, the MadLab team created a version of TACTUM that allowed for customization of a wrist brace for a smartwatch. “It was a complex problem to solve because gestures on the skin have a very low resolution,” Madeline revealed. “When you want to fit something that has very specific dimensions and size, you want to use standard CAD. So what we did was leave some parameters open and others closed. This way, we were able to place the watch on the skin in the desired position, but then let our standard CAD geometry generate the clips that would hold it in place.”

While TACTUM remains a research project with no commercial application planned for the near future, Madeline and her team are open to collaborations exploring future devices that could leverage TACTUM’s unique manufacturing possibilities, for example in the medical sector. “We are very interested in possible medical applications, especially since many prosthetists and orthopedists are not familiar with CAD software and could benefit significantly from a more intuitive interface such as TACTUM’s,” Madeline said. Her next step is an artist residency at Autodesk, where, like a growing number of digital artists, she will continue to push the limits of manufacturing and help the rest of us envision the future of making things.