Reality Computing is an all-encompassing approach created by Autodesk to understand, explore, and explain how 3D scanning, 3D modeling software (for example, Autodesk ReCap), and 3D printing are all part of a growing cyclical interplay between digital and physical information across an expanding range of industries.

But what does this mean exactly?

Fortunately, I had the opportunity to speak with Rick Rundell, Technology and Innovation Strategist at Autodesk, to explore why Reality Computing is unifying different industries under the same umbrella concept.

Andrew: What is Reality Computing?

Rick: Reality Computing is about capturing physical information digitally, using digital tools to create new knowledge and new designs, and then being able to deliver digital information into the physical world by materializing it through various computer-controlled processes or through augmented reality. We use the term “Reality Computing” to encompass an emerging set of workflows around the data required by 3D printing and also the data generated by various kinds of spatial sensing technologies.

I compare it to the evolution of music. I have some great examples of historical sheet music from around 1900. If you wanted to share a piece of music, you shared it with directions for reproducing it on a piano, for example. Incredibly, I actually have a piece of music that includes advertising. It was such a popular medium that they used it for advertising. And the advertisement is for a dentist who makes dentures for 5 dollars. The point is, musical notation is how people shared music for hundreds of years. It’s not sharing the experience of music, it’s just the directions for making the music. So much about the way we’ve shared information digitally about the physical world is similar to that.

A: Looking back at some of your interviews from the past, it seems as though you’ve been trying to crystallize this notion for some time.

R: Personally, this has been a big theme for me. I’ve always found the way we try to record information about the physical world for a digital environment unsatisfactory in some way. I was an architect, I was in practice, and I used CAD tools. Like every other architect, I was fascinated by geometry, and the kinds of things you could do with geometry in a computer program. I felt that the way we were able to understand the thing we were designing, or to understand the context of a design issue, was artificially constrained by the way the data was structured in the tools that we were using. This is back in the days of early object-oriented CAD tools. These products were trying to mimic a building geometrically; they would have objects and a host, and you would talk about the fact that a “door” was in a “wall”. You would have a wall object, and a wall object could host a door object. Whenever I heard something like that, I would sort of roll my eyes and say, “Wouldn’t it be interesting to be able to think about a door that is not in a wall?” If I look around my office and verbally describe it, I see floor-to-ceiling glass on two sides of my office looking out into an open area here, and one of the panels happens to be on hinges with a handle. It’s just another big sheet of glass, just like the rest of the panels. And interestingly, that was always a really hard condition to describe in the CAD software! So I’m delighted that we have a whole new way of capturing information about the physical world that does not presume anything about its structure. With Reality Data, just as you can run facial recognition on a photograph, you can analyze 3D data about the physical world to extract information about things like the location of pipes, ducts, clocks and so on.

A: Is it possible that this will open up a whole new set of structural possibilities within design solutions?

R: I think it will. And it’s starting to happen now in the arts community, where people are taking this kind of data and doing something interesting with it. Think about what happened when music was liberated from the instruments and vinyl used to record it, and became a digital artifact that could then be manipulated using digital tools. Music itself became an instrument of music. It became a self-referential process that enabled a whole new way of creating music, by quoting or sampling music from other people. Of course, composers and improvisers used to do this, but this blew open the music industry and the accessibility of creating music in an unprecedented way. I think that the growth of reality data and reality computing is going to allow people to do this as well. I’m looking for signs of this out in the world! And I’m always delighted when I find someone who is doing something interesting. I think we are at the point in the industry, going back to the music analogy, where the mp3 standard was just codified, and we have yet to understand what the impact of that will be in our industries, our culture and our professions.

A: It’s pretty amazing to be able to basically transport an object by scanning it, altering it (or not) using software, and then sending it to be 3D printed in an entirely different place in the world, in a different material.

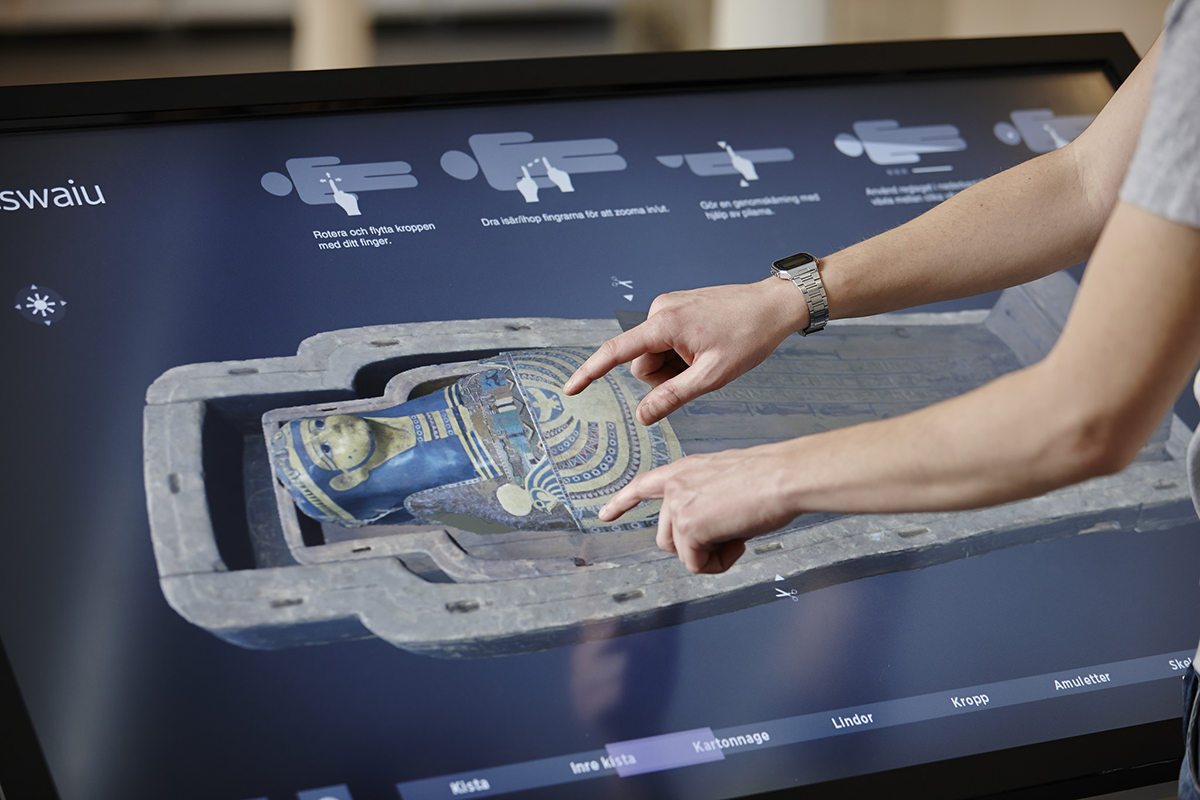

R: We have examples where we can take MRI data from a mummy that has not been unwrapped, and in that data will be the amulet that has been placed on the mummy’s chest or forehead, and we can bring that out and materialize it in a myriad of materials, including the original materials, gold, copper and so on. So you can actually hold in your hand a piece of jewellery identical to one that has not left that mummy in thousands of years. You could write poems about something like that. (laughs) Somebody could, not me.

Photography: Interactive Institute Swedish ICT / www.tii.se

Museum of Mediterranean and Near Eastern Antiquities