Neri Oxman and colleagues Dominik Kolb, James Weaver and Christoph Bader at the Massachusetts Institute of Technology’s (MIT) Mediated Matter group have published a patent for multimaterial 3D printing.

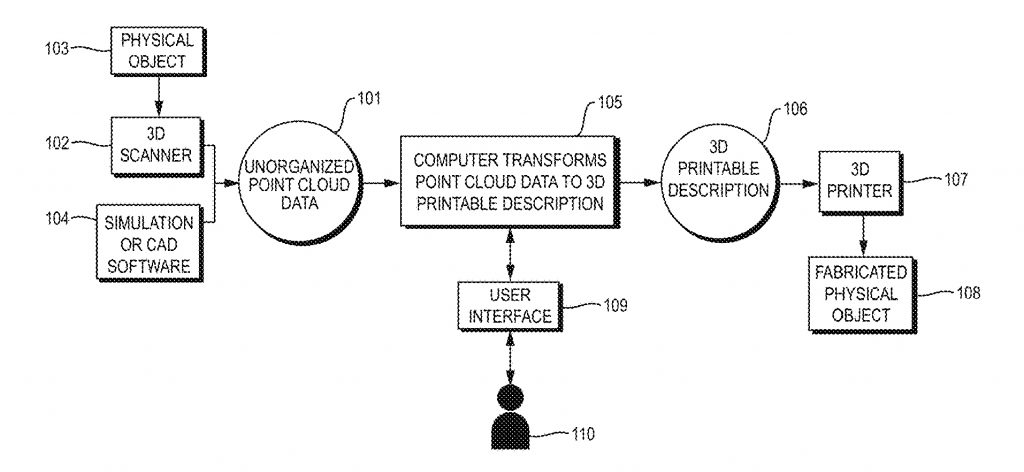

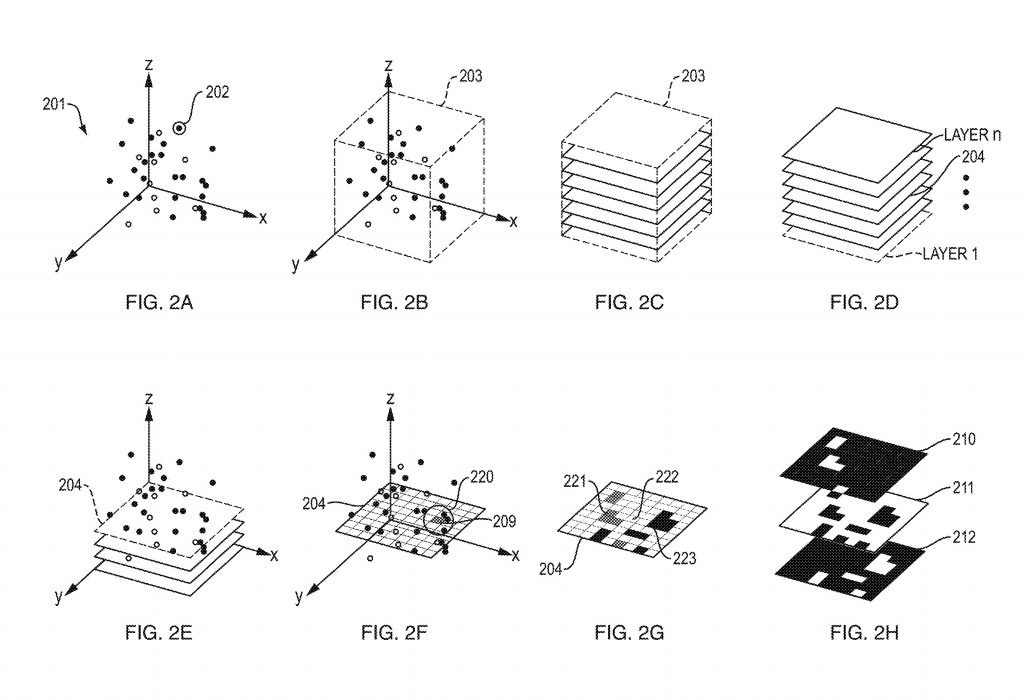

Published December 28th 2017, the patent discusses a way of translating 3D scanned point-cloud data into an operational command for 3D printers.

The patent application was published ahead of a potential sea change in the 3D printing industry, as machines gradually gain the ability to mix more than one material in a single 3D print.

So far, Stratasys PolyJet 3D printers, the 3D Systems ProJet series and Nano Dimension’s DragonFly 2020 are among the few systems that boast multimaterial abilities. Expanding material portfolios for 3D printers such as HP’s Multi Jet Fusion and Rize Inc.’s Rize One promise to keep growing this market in the near future.

With more than one material involved, it is clearer than ever that 3D printers need something more than the average .stl file: the G-code has to communicate not only the layers, but also which material is needed where.

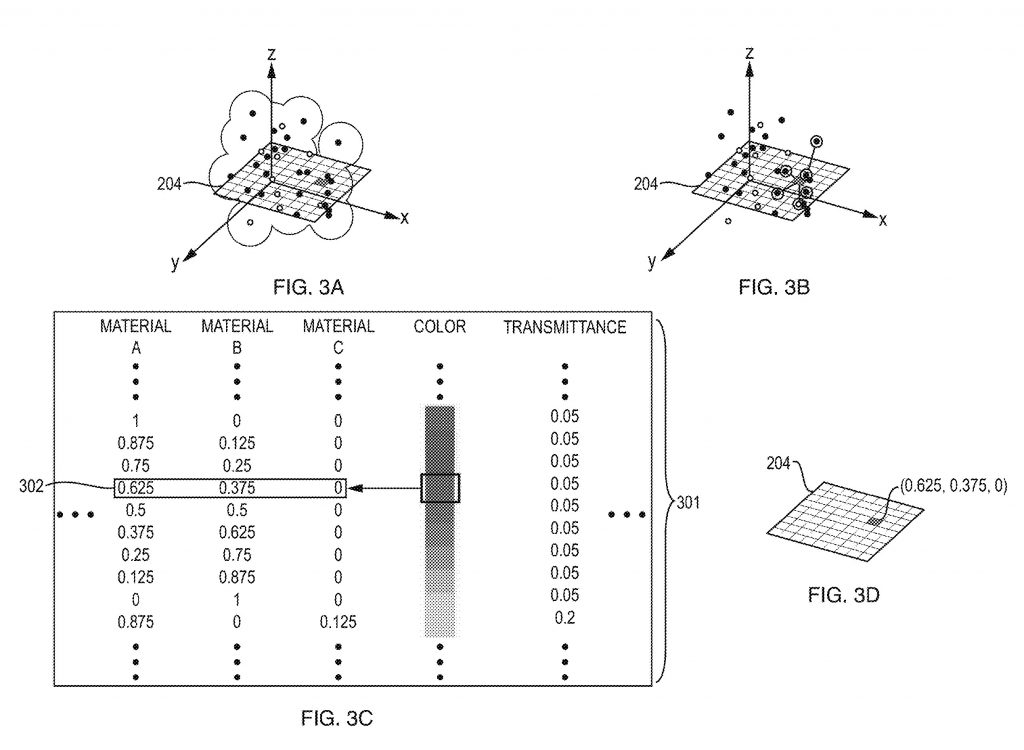

Oxman, Kolb, Weaver and Bader address this challenge by taking a pixel-by-pixel approach.

The bearable lightness of point-clouds

Oxman et al.’s method starts with point-cloud data, typically created using a 3D scanner, which can later be meshed to make a digital 3D model.

The main benefit of starting with a point cloud is that the data is relatively “light”, allowing large objects and areas to be visualized with minimal processing power. The technique is commonly used in land-surveillance technology, e.g. in ScanLAB Project’s digital render of Italy, and to make interactive explorations of museums.

Sort and slice

In the first step, the unorganized point cloud is converted into a spatial data structure that relates to the object in question.

Take a 3D-scanned bicycle wheel as an example: the spatial data captures the length of each spoke. Raw point-cloud data, by contrast, doesn’t measure any part of the wheel. The points are essentially meaningless unless they are somehow connected.
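To illustrate what organizing an unordered point cloud can look like, here is a minimal Python sketch. The article does not say which spatial data structure the patent uses, so the uniform grid below, along with the `build_grid_index` name, the `cell_size` parameter and the sample points, is an assumption made purely for illustration.

```python
from collections import defaultdict

def build_grid_index(points, cell_size):
    """Group unordered (x, y, z) points into cubic cells of side cell_size."""
    grid = defaultdict(list)
    for x, y, z in points:
        # The cell key is the integer grid coordinate that contains the point.
        key = (int(x // cell_size), int(y // cell_size), int(z // cell_size))
        grid[key].append((x, y, z))
    return grid

# Hypothetical sample points (arbitrary units), not data from the patent.
points = [(0.1, 0.2, 0.0), (0.4, 0.3, 0.1), (2.5, 0.1, 0.0)]
grid = build_grid_index(points, cell_size=1.0)
```

Once points are bucketed like this, neighbouring points can be looked up by cell rather than by scanning the whole cloud, which is the kind of connection between points that the raw data lacks.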

The spatial data for the object is then sliced into the layers that make up the overall 3D structure, and each layer is computationally divided into pixels.
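A rough Python sketch of the slice-then-pixelate idea follows. It is not the patented algorithm: the function name `slice_to_pixels`, its parameters and the simple "a pixel is on if a point falls inside it" rule are all assumptions chosen to keep the example short.

```python
def slice_to_pixels(points, layer_height, pixel_size, width, height):
    """Return {layer_index: 2D list of 0/1} marking pixels that contain points."""
    layers = {}
    for x, y, z in points:
        k = int(z // layer_height)    # which layer the point falls in
        col = int(x // pixel_size)    # pixel column within that layer
        row = int(y // pixel_size)    # pixel row within that layer
        if 0 <= col < width and 0 <= row < height:
            layer = layers.setdefault(k, [[0] * width for _ in range(height)])
            layer[row][col] = 1
    return layers

# Hypothetical points: two in the bottom layer, one in the layer above.
points = [(0.5, 0.5, 0.5), (1.5, 0.5, 0.5), (0.5, 1.5, 1.5)]
layers = slice_to_pixels(points, layer_height=1.0, pixel_size=1.0, width=2, height=2)
```

Each entry in `layers` is a small 2D grid, so the 3D object is only ever handled one flat layer at a time.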

Pixel mixing

At this point in the process, you have a 3D object that has been reduced to pixels. Now, you need to determine how these pixels should be filled to create the designed object. Returning to the bike wheel example – which parts are metal and which parts are rubber? Furthermore, if one part is rubber, how dense or flexible does it need to be? And also, what color is it?

With this information, part (d) of the patented method can be calculated. Each respective layer is given a set of binary raster files (dot matrix structures) “that encode material deposition instructions.”
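As a hedged illustration of what a set of binary rasters per layer could mean, the Python sketch below splits one layer of per-pixel material labels into a separate 0/1 grid for each material. The label names, the `material_rasters` function and the in-memory list format are assumptions; the article does not describe the actual file format.

```python
def material_rasters(label_layer, materials):
    """Split a layer of per-pixel material labels into one 0/1 raster per material."""
    return {
        m: [[1 if label == m else 0 for label in row] for row in label_layer]
        for m in materials
    }

# Hypothetical 2 x 3 layer; None marks an empty pixel.
label_layer = [
    ["rubber", "rubber", None],
    ["metal", "rubber", None],
]
rasters = material_rasters(label_layer, ["metal", "rubber"])
```

In this sketch a 1 in the "rubber" raster means "deposit rubber at this pixel", and the same pixel is 0 in every other material's raster.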

And finally…print

In the penultimate step, G-code-like instructions are generated for the respective 3D printer based on the binary raster files for the layers. Then voilà! A multimaterial 3D object is made.
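The Python sketch below shows one way a binary raster could be turned into printer instructions, by emitting one command per horizontal run of filled pixels. The `LAYER` and `DEPOSIT` commands are invented for this example and are not real G-code or the instruction set of any actual printer.

```python
def raster_to_commands(layer_index, material, raster):
    """Emit one hypothetical DEPOSIT command per horizontal run of 1-pixels."""
    commands = [f"LAYER {layer_index} MATERIAL {material}"]
    for row_index, row in enumerate(raster):
        start = None
        for col, bit in enumerate(row + [0]):  # trailing 0 closes a run at row end
            if bit and start is None:
                start = col                    # a new run of filled pixels begins
            elif not bit and start is not None:
                commands.append(f"DEPOSIT ROW {row_index} FROM {start} TO {col - 1}")
                start = None
    return commands

# Hypothetical raster for a single material in layer 0.
commands = raster_to_commands(0, "rubber", [[1, 1, 0], [0, 1, 0]])
```

Repeating this for every material raster in every layer would yield a complete, if simplified, instruction stream for the print.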

Pixels, not voxels

A similar concept using 3D pixels, i.e. voxels, has been considered before in research by MIT’s Computer Science and Artificial Intelligence Lab (CSAIL). Addressing this conventional approach, the Oxman et al. patent states that this “tends to be impractical due to the extremely massive dataset needed to describe a large multi-material object by a 3D voxel representation” in relation to a 3D printer’s resolution.

Applying the specifications of a Stratasys J750 3D Printer as an example, the inventors state, “At this native resolution (which is in the approximate range of many multi-material 3D printers), the amount of data required for a 3D voxel representation of a large (e.g. more than 40 cm×30 cm×20 cm), multi-material object would typically be massive.”

“Indeed, the dataset would typically be so large that it would be impractical in most real-world scenarios, either due to insufficient memory or massive computational load resulting in very slow processing.”
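A back-of-the-envelope Python calculation gives a feel for the scale involved. The article does not state the printer's native resolution, so the 600 × 300 dpi in-plane resolution and 14 micron layer height used here are illustrative assumptions, as is the figure of one byte per voxel.

```python
import math

def voxel_count(size_cm, dpi_x, dpi_y, layer_um):
    """Count the voxels needed to fill a box at a given print resolution."""
    x_cm, y_cm, z_cm = size_cm
    nx = math.ceil(x_cm / 2.54 * dpi_x)       # cm -> inches -> dots along x
    ny = math.ceil(y_cm / 2.54 * dpi_y)       # cm -> inches -> dots along y
    nz = math.ceil(z_cm * 10_000 / layer_um)  # cm -> microns -> layers
    return nx * ny * nz

# Assumed resolution values; only the 40 x 30 x 20 cm size comes from the quote.
n = voxel_count((40, 30, 20), dpi_x=600, dpi_y=300, layer_um=14)
print(f"{n:.2e} voxels, roughly {n / 1e9:.0f} GB at one byte per voxel")
```

Under these assumptions the object needs several hundred billion voxels, which is consistent with the inventors' point that a full voxel representation quickly outgrows ordinary memory.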

U.S. Patent Application 20170368755, alternately titled Methods and Apparatus for 3D Printing of Point Cloud Data, can be accessed online.


Featured image shows each step of the point-cloud to pixels data file prep as detailed in the Oxman et al. patent. Image via FPO